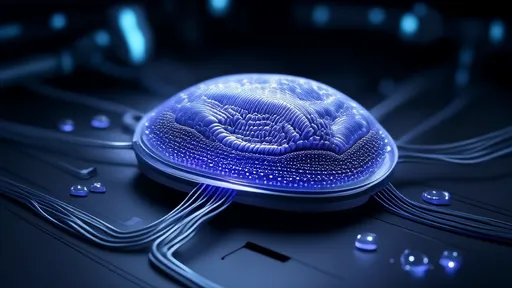

In the rapidly evolving field of artificial intelligence, neuromorphic engineering has emerged as a groundbreaking approach to mimicking biological neural systems. Among its most promising applications is the development of retina-inspired spiking vision chips, which could reshape fields ranging from robotics to medical imaging. These bio-inspired sensors do not just capture images: they process visual information in ways that differ fundamentally from conventional cameras, with far lower power draw and latency.

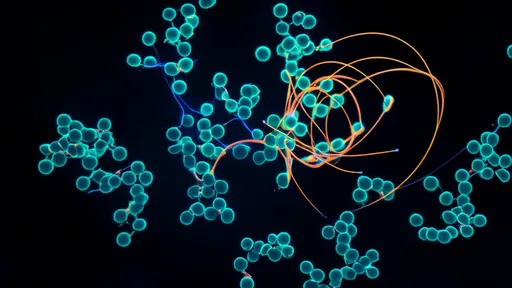

The human retina has long fascinated scientists with its elegant efficiency. Unlike digital cameras that capture frames at fixed intervals, the retina processes visual information continuously through specialized neurons that fire only when they detect meaningful changes in the environment. This event-driven approach eliminates redundant data processing and enables lightning-fast responses to visual stimuli. Neuromorphic engineers have spent decades trying to replicate this biological marvel in silicon.

How Retina-Inspired Chips Differ from Traditional Vision Systems

Traditional computer vision systems rely on sequentially capturing and processing full image frames, regardless of whether the scene has changed. This approach generates massive amounts of redundant data and requires significant computational power for processing. In contrast, neuromorphic vision sensors like the retina-inspired spiking chips output sparse, asynchronous spikes only when pixels detect changes in light intensity. This event-based operation closely mimics the biological retina's functionality.
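
The event-based principle described above can be sketched in a few lines. This is a minimal simulation, not a model of any particular chip: it derives events from ordinary frames, and the log-intensity comparison, the `threshold` value, and the `(t, x, y, polarity)` tuple layout are all assumptions made for illustration. A real sensor does this asynchronously in each pixel's circuitry rather than by scanning frames.

```python
import math

def generate_events(frames, threshold=0.2):
    """Emit (t, x, y, polarity) events when a pixel's log intensity has
    changed by at least `threshold` since that pixel's last event."""
    height, width = len(frames[0]), len(frames[0][0])
    # Per-pixel reference level, initialised from the first frame.
    ref = [[math.log(frames[0][y][x] + 1.0) for x in range(width)]
           for y in range(height)]
    events = []
    for t, frame in enumerate(frames[1:], start=1):
        for y in range(height):
            for x in range(width):
                level = math.log(frame[y][x] + 1.0)
                diff = level - ref[y][x]
                if abs(diff) >= threshold:
                    # Polarity +1 means brighter, -1 means darker.
                    events.append((t, x, y, 1 if diff > 0 else -1))
                    ref[y][x] = level  # update only the pixel that fired
    return events

static = [[10, 10], [10, 10]]
moved = [[10, 50], [10, 10]]   # one pixel gets brighter
events = generate_events([static, static, moved, moved])
print(events)  # [(2, 1, 0, 1)]
```

Four frames go in, but only one event comes out: the unchanged pixels and the repeated frames produce no output at all.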

The implications of this difference are profound. Where a conventional camera running at 60 frames per second would capture 60 identical frames of a static scene every second, a neuromorphic vision sensor would remain silent until something actually moves or changes. This yields substantial power savings and can reduce data processing requirements by orders of magnitude. Early prototypes have demonstrated power consumption as low as a few milliwatts while maintaining microsecond-level latency, figures that conventional frame-based systems struggle to match.
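
To make the data-volume argument concrete, here is a back-of-the-envelope comparison. Every number in it is hypothetical: the 640x480 resolution, one byte per pixel, eight bytes per event, and the event rate of 10,000 per second are assumptions chosen only to show the arithmetic, not measurements from any sensor.

```python
def frame_bytes_per_second(width, height, fps, bytes_per_pixel=1):
    """Raw output of a frame-based camera: every pixel, every frame."""
    return width * height * fps * bytes_per_pixel

def event_bytes_per_second(events_per_second, bytes_per_event=8):
    """Raw output of an event-based sensor: only the pixels that changed."""
    return events_per_second * bytes_per_event

frame_rate = frame_bytes_per_second(640, 480, 60)   # 18,432,000 bytes/s
event_rate = event_bytes_per_second(10_000)         # 80,000 bytes/s
static_rate = event_bytes_per_second(0)             # idealised static scene

print(frame_rate, event_rate, static_rate)
print(round(frame_rate / event_rate, 1))  # 230.4
```

Under these assumed figures the event stream is roughly 230 times smaller, and in the idealised static case it drops to zero while the frame camera's output stays constant.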

Breakthroughs in Retinal Chip Architecture

Recent advances in semiconductor technology have enabled researchers to create increasingly sophisticated retina-like chips. One notable line of work replicates the retina's layered structure in silicon. These chips typically contain photodetectors that mimic retinal photoreceptors, connected to networks of artificial neurons that approximate the behavior of retinal ganglion cells.
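
One common way to model an artificial spiking neuron is the leaky integrate-and-fire model, sketched below. The article does not say which neuron model these chips use, so treat this as an illustrative assumption; the `leak` and `threshold` values and the function name are hypothetical.

```python
def lif_spike_times(inputs, leak=0.9, threshold=1.0):
    """Leaky integrate-and-fire: accumulate input, decay over time,
    and emit a spike (then reset) when the potential crosses threshold."""
    potential = 0.0
    spikes = []
    for t, current in enumerate(inputs):
        potential = potential * leak + current  # decay, then integrate
        if potential >= threshold:
            spikes.append(t)
            potential = 0.0  # reset after firing
    return spikes

# Input current, e.g. from a photodetector stage (arbitrary units).
print(lif_spike_times([0.5, 0.5, 0.5, 0.0, 0.0, 0.6, 0.6]))  # [2, 6]
print(lif_spike_times([0.0] * 5))                             # []
```

The neuron stays silent with no input and fires only after enough stimulation has accumulated, which is the behavior that keeps the output sparse.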

The most advanced prototypes now incorporate adaptive mechanisms similar to those found in biological vision. These include automatic gain control that adjusts to different lighting conditions, motion-sensitive pathways that prioritize moving objects, and even rudimentary edge detection performed at the sensor level. Some research groups have gone further by implementing the retina's center-surround receptive fields, which enhance contrast detection and motion sensitivity.
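
A center-surround receptive field can be approximated in software as each pixel's value minus the average of its neighbors. The sketch below uses a 3x3 neighborhood with equal weights, which is a simplifying assumption; on-chip implementations are analog circuits and may weight the surround differently.

```python
def center_surround(image):
    """Return center minus mean-of-surround for every pixel (3x3 window)."""
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Collect the neighbours that fall inside the image bounds.
            neighbours = [
                image[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
                if (ny, nx) != (y, x)
            ]
            surround = sum(neighbours) / len(neighbours) if neighbours else 0.0
            out[y][x] = image[y][x] - surround
    return out

flat = [[5, 5, 5], [5, 5, 5], [5, 5, 5]]
spot = [[0, 0, 0], [0, 8, 0], [0, 0, 0]]
print(center_surround(flat)[1][1])  # 0.0
print(center_surround(spot)[1][1])  # 8.0
```

A uniform region produces no response, while a local contrast produces a strong one, which is why this arrangement enhances edges and suppresses flat backgrounds.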

Applications Transforming Multiple Industries

The unique properties of retina-inspired vision chips are finding applications across diverse fields. In autonomous vehicles, these sensors provide ultra-low-latency obstacle detection while consuming minimal power. Robotics researchers are integrating them into systems that require rapid visual feedback, such as drone navigation and industrial automation. The medical field sees potential in retinal prosthetics and new diagnostic tools that could interface more naturally with the human visual system.

Perhaps one of the most exciting applications is in always-on surveillance and IoT devices. The combination of low power consumption and event-based operation means these sensors can operate for years on small batteries, only activating higher-level processing when something meaningful occurs. This could enable a new generation of smart sensors that maintain visual awareness without compromising privacy or requiring massive data storage.
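
The idea of activating higher-level processing only when something meaningful occurs can be sketched as a simple event-rate gate. The class name `WakeUpGate`, the 10 ms window, and the three-event threshold are hypothetical choices for illustration; a deployed device would tune these and would likely filter sensor noise first.

```python
from collections import deque

class WakeUpGate:
    """Signal a wake-up when enough events arrive within a short window."""

    def __init__(self, window_us=10_000, min_events=3):
        self.window_us = window_us
        self.min_events = min_events
        self._timestamps = deque()

    def on_event(self, timestamp_us):
        """Record one event; return True if the downstream stage should wake."""
        self._timestamps.append(timestamp_us)
        # Drop events that have fallen out of the sliding window.
        while timestamp_us - self._timestamps[0] > self.window_us:
            self._timestamps.popleft()
        return len(self._timestamps) >= self.min_events

gate = WakeUpGate()
decisions = [gate.on_event(t) for t in (0, 50_000, 100_000, 100_200, 100_400)]
print(decisions)  # [False, False, False, False, True]
```

Isolated events spaced far apart never trigger the gate; only the burst of three events within 10 ms does, so the expensive processing stage can stay asleep most of the time.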

Challenges and Future Directions

Despite their promise, retina-inspired neuromorphic chips face several challenges before achieving widespread adoption. The event-based output requires entirely new algorithms and processing pipelines, as traditional computer vision techniques don't translate well to sparse, asynchronous data streams. Manufacturing consistency is a further challenge: biological systems tolerate variability well, but silicon implementations often require precise calibration.
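
To illustrate the mismatch between event streams and frame-oriented algorithms, the sketch below shows one simple bridging technique: accumulating events from a time window into a signed count image. This is an assumption about how one might adapt the data, not a method described in this article, and it gives up some of the timing precision that makes event data valuable. The `(t, x, y, polarity)` event layout is likewise hypothetical.

```python
def accumulate_events(events, width, height, t_start, t_end):
    """Sum event polarities per pixel over the window [t_start, t_end)."""
    frame = [[0] * width for _ in range(height)]
    for t, x, y, polarity in events:
        if t_start <= t < t_end:
            frame[y][x] += polarity
    return frame

events = [(5, 0, 0, 1), (7, 0, 0, 1), (9, 1, 1, -1), (25, 1, 0, 1)]
print(accumulate_events(events, 2, 2, 0, 20))   # [[2, 0], [0, -1]]
print(accumulate_events(events, 2, 2, 20, 40))  # [[0, 1], [0, 0]]
```

The resulting grid can be fed to a conventional image pipeline, but all events inside a window collapse to a single timestamp, which is one reason researchers pursue algorithms that work on the raw stream instead.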

Looking ahead, researchers are working on several fronts to advance this technology. Some are exploring three-dimensional chip architectures that could better emulate the retina's layered structure. Others are investigating hybrid approaches that combine the best aspects of frame-based and event-based vision. Perhaps most ambitiously, several groups are working toward full-system implementations that would integrate these bio-inspired sensors with neuromorphic processors, creating complete vision systems that operate on principles fundamentally different from conventional approaches.

As the field matures, retina-inspired neuromorphic vision chips may well redefine how machines see and interpret the visual world. By learning from millions of years of biological evolution, engineers are creating sensing technologies that are not just faster and more efficient, but in some ways more selective about what deserves attention. The implications for AI, robotics, and human-machine interfaces could be profound. We may be witnessing the dawn of a new era in machine vision.

By /Aug 14, 2025
